What Is an AI Data Center? Meaning, Components & More

AI is fast becoming a part of our daily lives. From chatbots and recommendation engines to fraud checks and language translation, it now supports a wide range of everyday tasks. Although these tools may look simple to the user, they rely on extremely heavy computing in the background. Traditional data centers were built mainly to host websites, email and business software. They use standard servers and do not always have the power or cooling needed for intense AI workloads.

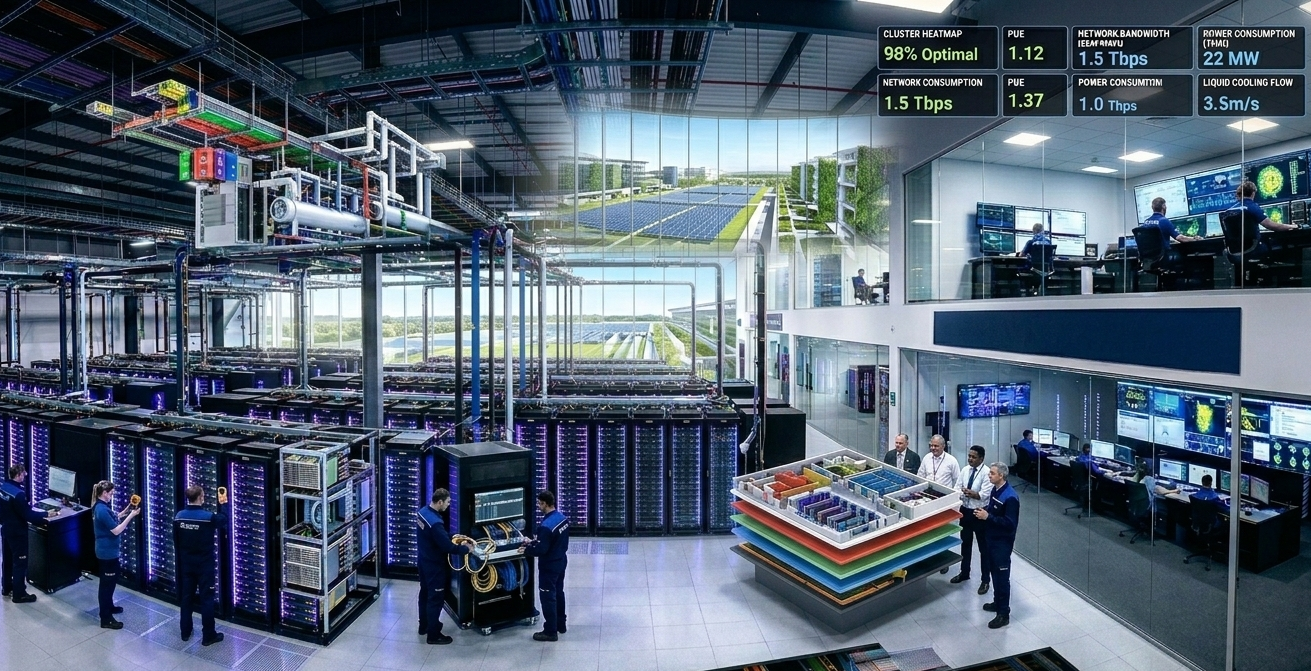

This is where AI data centers come in. The answer to “What is an AI data center?” is simple: it is a facility built to handle the requirements and demands of AI systems. It brings together high‑performance data center servers, fast storage, advanced networking and strong power and cooling systems in one place. Let’s look at how it works, why AI needs special data centers, and more.

AI Data Center Definition

An AI data center is a data center that has been designed and equipped mainly to run AI workloads, not just general IT tasks. It can be thought of as a “factory” for AI: large amounts of data come in, AI systems process this data on powerful hardware, and useful outputs such as predictions, recommendations or answers go back to users and applications.

Key points:

- It is a physical facility, usually a large building or campus.

- It holds high‑density racks of data center servers.

- It includes fast storage, high‑speed networking and advanced power and cooling systems.

Why Does AI Need Special Data Centers?

AI needs very powerful computers, so it runs on special data center servers with GPUs rather than only on standard general‑purpose servers. AI also works with huge amounts of data, so it needs quick storage and very fast networks to move that data without slowing down. Other reasons include:

- These powerful servers use a lot of electricity and become extremely hot. The building therefore needs strong power systems and advanced cooling to keep them safe.

- Many AI apps, like chatbots or fraud checks, must answer almost instantly, so the whole setup is designed to be fast and always on.

Main Components of an AI Data Center

Core building blocks

The core elements of AI infrastructure can be grouped as shown below:

| Component | Description |
| --- | --- |
| Data center servers | Powerful servers with GPUs/AI chips to train and run AI models. |
| Storage systems | Fast drives to hold and read massive datasets needed for AI. |
| Network | High‑speed links that move data between servers and storage. |
| Power and Cooling | Systems that supply electricity and remove heat safely. |
| Security and Control | Tools to monitor and protect AI workloads and data. |

Note: All these AI data center components must work together smoothly. If storage or the network is slow, performance drops because the GPUs in the data center servers sit idle while they wait for data.
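The point about idle GPUs can be illustrated with a rough calculation. The sketch below is only a simplified model, not a sizing method: it assumes the GPUs can be fed no faster than the slowest of storage and network, and the function name `gpu_utilization` and all the throughput figures are hypothetical values chosen for illustration.

```python
def gpu_utilization(gpu_demand_gbps, storage_gbps, network_gbps):
    """Rough share of time the GPUs stay busy (0.0 to 1.0).

    Simplifying assumption: data reaches the GPUs at the rate of the
    slowest stage (storage or network), and the GPUs wait otherwise.
    All rates are in gigabytes per second.
    """
    delivered = min(storage_gbps, network_gbps)
    return min(1.0, delivered / gpu_demand_gbps)


# Hypothetical cluster whose GPUs could consume 40 GB/s of training data.
slow_storage = gpu_utilization(gpu_demand_gbps=40.0, storage_gbps=10.0, network_gbps=100.0)
fast_storage = gpu_utilization(gpu_demand_gbps=40.0, storage_gbps=80.0, network_gbps=100.0)

print(f"Slow storage: GPUs busy {slow_storage:.0%} of the time")
print(f"Fast storage: GPUs busy {fast_storage:.0%} of the time")
```

In this made-up case the network is fast enough, yet the slow storage alone keeps the GPUs busy only a quarter of the time, which is why the components have to be balanced against each other.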

Data Center Servers and AI Hardware

Data center servers are the workhorses of an AI data center.

- They use GPUs and other AI accelerators along with CPUs to handle many calculations in parallel.

- These servers are usually grouped into clusters so that many machines can work on one AI task at the same time, such as training a large language model.

- Racks are often “high‑density”, meaning they pack a lot of computing power into a small space, which increases power use and heat.

Storage and Networking

AI models and training datasets can run into terabytes or even petabytes. Storage must therefore be fast (often NVMe SSDs) so that data reaches the GPUs without delay. The network inside the data center should also offer high bandwidth and low latency so that data moves quickly between servers and storage.
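To give a feel for these data volumes, here is a small back-of-the-envelope sketch. It assumes an ideal link with no protocol overhead or congestion, so real transfers would take longer. The helper name `transfer_seconds` and the link speeds are illustrative assumptions, not figures from any particular facility.

```python
TB = 10**12  # bytes in a terabyte (decimal)
PB = 10**15  # bytes in a petabyte (decimal)


def transfer_seconds(dataset_bytes, link_bits_per_second):
    """Ideal time to move a dataset over one link, ignoring all overhead."""
    return dataset_bytes * 8 / link_bits_per_second


# Hypothetical link speeds for comparison.
links = {"10 Gbit/s": 10 * 10**9, "100 Gbit/s": 100 * 10**9}

for name, speed in links.items():
    hours = transfer_seconds(1 * PB, speed) / 3600
    print(f"1 PB over {name}: about {hours:.1f} hours")
```

Under these assumptions a single terabyte takes 80 seconds over a 100 Gbit/s link, while a petabyte takes roughly a day, which is why AI facilities rely on many fast links working in parallel.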

How AI Data Centers Differ from Traditional Data Centers

Traditional data centers and AI data centers share many basic elements, but they differ in several important ways. The key differences are:

| Aspect | Traditional Data Center | AI Data Center |
| --- | --- | --- |
| Main workloads | Websites, email, business apps | AI training and inference at scale |
| Hardware focus | General‑purpose CPUs, standard servers | GPU/accelerator‑rich data center servers |
| Power per rack | Lower to medium power density | Very high power density racks |
| Cooling approach | Mostly air cooling | Advanced cooling, including liquid‑based methods |
| Network requirements | Standard data speeds | Very high bandwidth, low-latency fabrics |

Power and cooling

AI workloads draw much more power than traditional workloads because GPUs and AI accelerators consume more energy than typical CPUs. AI data centers are therefore built to support higher power per rack, and they often use liquid cooling or advanced airflow designs to manage heat efficiently. This is required to keep hardware stable and to avoid performance drops or failures.
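The gap in power per rack can be seen with simple arithmetic. The sketch below is an illustration under stated assumptions only: the function `rack_power_kw`, the server counts and the wattages are hypothetical round numbers, not measurements from a real facility.

```python
def rack_power_kw(servers_per_rack, gpus_per_server, watts_per_gpu, other_watts_per_server):
    """Estimate the electrical load of one rack in kilowatts."""
    watts_per_server = gpus_per_server * watts_per_gpu + other_watts_per_server
    return servers_per_rack * watts_per_server / 1000


# Hypothetical GPU rack: 4 servers, each with 8 accelerators at 700 W,
# plus 2000 W per server for CPUs, memory, fans and other parts.
gpu_rack = rack_power_kw(4, 8, 700, 2000)

# Hypothetical CPU-only rack: 20 servers at 500 W each, no accelerators.
cpu_rack = rack_power_kw(20, 0, 0, 500)

print(f"GPU rack: {gpu_rack} kW, CPU-only rack: {cpu_rack} kW")
```

With these assumed figures, four GPU servers draw about three times as much as twenty CPU-only servers. Since nearly all of that electricity ends up as heat, the cooling system has to remove a similar amount of heat from each rack.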

Types of AI Data Centers

Different organizations use AI data centers in different ways. Here are some of the common AI data center types:

| AI Data Center Type | Description |
| --- | --- |
| On-premises AI data center | Built at the company’s own location, giving it full control over the setup. |
| Cloud-based AI data center | A data center that is accessed online and helps businesses scale computing as needed. |
| Hybrid AI data center | A setup that uses both on-site and cloud AI data centers together. |
| Hyperscale AI data center | Large-scale infrastructure built to handle massive AI workloads. |
| Edge AI data center | A small data center placed close to users for faster processing. |
| Colocation AI data center | A setup where companies place their own servers in a shared facility. |

Use Case: AI Customer Support in Online Shopping

Many large online shopping companies now use AI chatbots to answer customer queries about orders, payments, returns and delivery. The chatbot works around the clock and talks to many customers at once. To reply fast, the AI needs strong computers and quick access to data. An AI data center supports this by taking care of all the processing in the background. With strong power and proper cooling, the chatbot keeps working smoothly, even on days of high customer traffic.

Conclusion

AI data centers are the backbone of today’s AI tools. They are built to handle heavy computing, large data, high power use and constant cooling needs. Unlike traditional data centers, they use powerful GPU-based servers, fast storage and strong networks to keep AI applications running smoothly and quickly. Now that more businesses have started using AI, the demand for such advanced data centers with the right AI infrastructure is set to grow.

Nxtra by Airtel plays an important role by helping organizations access facilities designed for modern AI needs. With reliable data centers and essential AI data center components such as strong power, cooling systems, secure environments and high-speed connectivity, Nxtra by Airtel makes it easier for businesses to run AI workloads without stress. It not only helps companies grow but also makes them ready for the future.

Key Takeaways

- AI data centers are built to handle heavy AI workloads.

- They use powerful hardware, fast storage and advanced cooling.

- GPUs and high-speed networks are essential for AI performance.

- Power and cooling needs are much higher than in traditional setups.

- AI-ready facilities help businesses scale their AI projects smoothly.

FAQs (Frequently Asked Questions)

- Why do AI workloads need more computing power?

AI models process large data sets and perform complex calculations. This often requires stronger hardware than normal applications need.

- What role does cooling play in AI environments?

AI hardware produces a lot of heat. Advanced cooling removes that heat and keeps systems stable and running efficiently.

- What makes an AI data center different from a normal data center?

AI data centers are different from normal data centers as they use powerful GPU-based servers, faster networks and stronger cooling to handle heavy AI workloads.
