How to Cool AI Enterprise Workloads (Different Cooling Methodologies)

AI enterprise workloads run on powerful GPUs and CPUs that generate a great deal of heat. Traditional cooling systems often struggle to manage it. Efficient cooling matters because it keeps systems running smoothly, lowers energy costs, and supports sustainability. This blog covers simple and effective data center cooling methods for AI environments, helping businesses improve performance and plan for future growth.

Why AI Needs Better Cooling

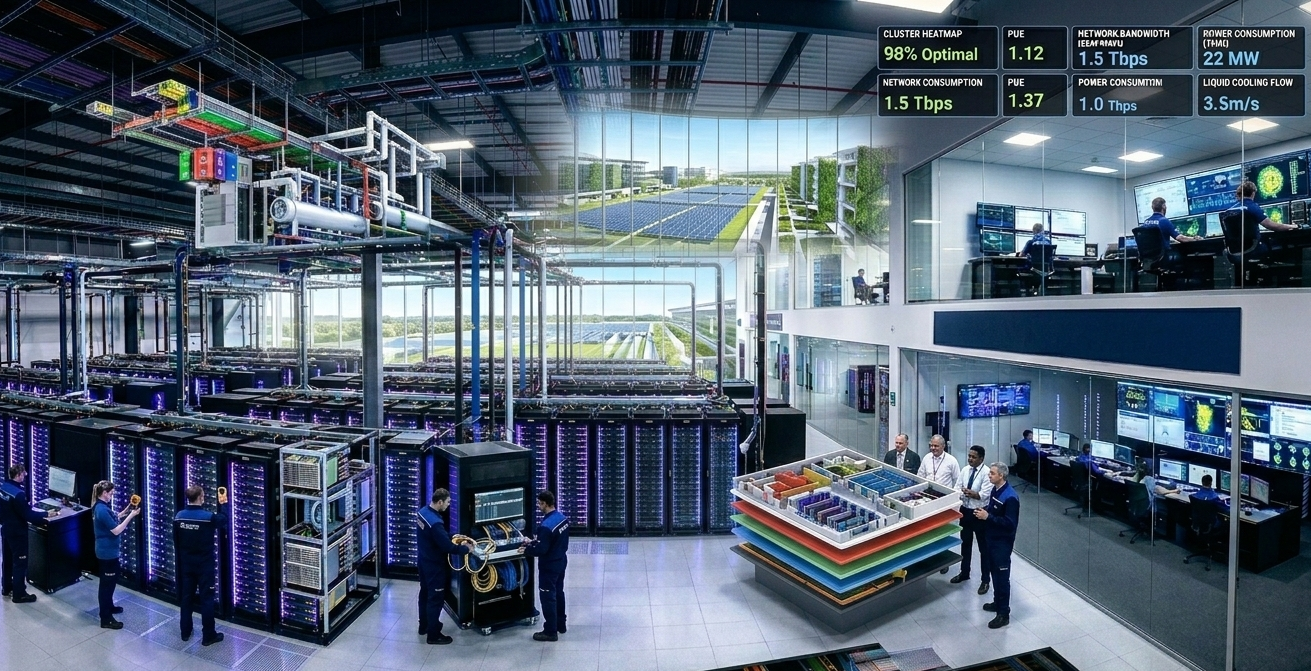

AI workloads, like training large language models or running real-time inference, use a huge amount of power. Because of this, each data center rack needs to be designed to support high power densities, typically ranging from 40 kW to more than 100 kW per rack.

Traditional air cooling systems were built for lower-density racks in the 5–20 kW range, making them inadequate for the heat generated by AI workloads. As rack densities increase, insufficient cooling can lead to overheating, reduced performance, and higher risk of hardware failures.

The challenge intensifies as AI adoption accelerates. Data centers already consume significant amounts of electricity, with cooling accounting for roughly 30–40% of total energy usage. Inefficient cooling not only increases operating costs but also makes it more difficult to meet sustainability and energy-efficiency goals.

This is why many businesses are moving toward data centers with liquid cooling capabilities. Since liquids remove heat far more efficiently than air, these solutions can support high-density AI workloads while improving overall energy efficiency.
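To make the air-versus-liquid comparison concrete, the sketch below estimates the volumetric flow needed to carry away a rack's heat using the sensible-heat relation Q = density × flow × specific heat × temperature rise. It is an illustration under stated assumptions, not a sizing tool: the fluid properties are approximate room-temperature textbook values, the 10 K temperature rise is chosen arbitrarily, and plain water stands in for the coolant (real systems may use water-glycol mixtures or dielectric fluids with different properties).

```python
# Approximate fluid properties near room temperature (assumed textbook values).
AIR = {"density": 1.2, "cp": 1005.0}      # kg/m^3, J/(kg*K)
WATER = {"density": 997.0, "cp": 4180.0}  # kg/m^3, J/(kg*K)


def required_flow_m3_per_s(heat_w, fluid, delta_t_k):
    """Volumetric flow needed to absorb heat_w watts with a delta_t_k rise.

    Rearranges Q = density * flow * cp * delta_T to solve for flow.
    """
    if heat_w < 0 or delta_t_k <= 0:
        raise ValueError("heat must be non-negative and delta_t positive")
    return heat_w / (fluid["density"] * fluid["cp"] * delta_t_k)


if __name__ == "__main__":
    rack_heat_w = 100_000  # a 100 kW AI rack, the upper range cited above
    delta_t_k = 10.0       # assumed coolant temperature rise (illustrative)

    air_flow = required_flow_m3_per_s(rack_heat_w, AIR, delta_t_k)
    water_flow = required_flow_m3_per_s(rack_heat_w, WATER, delta_t_k)

    print(f"Air:   {air_flow:.2f} m^3/s")
    print(f"Water: {water_flow * 1000:.2f} L/s")
    print(f"Air needs about {air_flow / water_flow:.0f}x the volume flow")
```

Under these assumptions, air needs several thousand times the volume flow of water to remove the same heat, which is the practical reason liquid loops scale to rack densities that fans cannot.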

Air Cooling Basics

Air cooling is still one of the most common data center cooling methods. In hot aisle and cold aisle configurations, cold air is supplied through the raised floor to the front of the racks, while hot air is expelled from the rear. In cold aisle containment, the cold air corridor is enclosed, whereas in hot aisle containment, the hot air corridor is enclosed.

However, with advanced AI systems and higher rack densities, air cooling begins to reach its operational limits. Cooling fans and precision air handling units (PAHUs) must work harder to manage the increased heat load, leading to higher power consumption and greater noise levels.

Direct-to-Chip Cooling

Direct-to-Chip (D2C) cooling removes heat directly at the source. In this method, cold plates are mounted onto high-performance components such as CPUs and GPUs, allowing coolant to absorb heat directly from the chips. The heated liquid is then circulated to a Coolant Distribution Unit (CDU), where the heat is removed before the cooled liquid is recirculated back into the system. In single-phase liquid cooling systems, the coolant remains in liquid form throughout the entire process.

D2C cooling is well-suited for high-density AI environments, supporting rack power densities exceeding 100 kW. By transferring heat more efficiently than air cooling, it reduces reliance on high-speed fans, lowering noise levels. This also enables greater compute density within each rack.

For AI training and high-performance computing workloads, D2C cooling delivers consistent thermal management by maintaining stable chip temperatures. This helps sustain processing performance, improve training efficiency, and enhance long-term hardware reliability.

Immersion Cooling Options

Immersion cooling uses a fundamentally different approach to thermal management. Instead of cooling specific components, entire servers are submerged in specially engineered dielectric fluids that do not conduct electricity, making them safe for electronic hardware. In single-phase immersion cooling systems, the fluid continuously circulates around the components, absorbing heat directly from the equipment.

This method is capable of supporting extremely high-density AI workloads. By reducing dependence on air-based cooling infrastructure, immersion cooling enables quieter operations and lower energy consumption.

Hybrid and Smart Systems

Hybrid systems combine different data center cooling methods in one facility.

For example, air cooling may support regular IT systems while Direct-to-Chip cooling handles powerful AI racks.

This gives businesses more flexibility, and smart monitoring and control technology makes these systems more effective still.

Sensors monitor chip temperatures, airflow, humidity, and cooling performance in real time. AI-based controls can predict heat increases and automatically adjust pumps, valves, or airflow. When connected with DCIM software, teams can find problems early and improve planning.
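The idea of predicting a heat increase and adjusting a pump ahead of time can be sketched in a few lines. The example below is a deliberately simple stand-in: it uses a linear trend extrapolation and a proportional rule rather than an AI model, and every name and number in it (`PumpController`, the 65 °C setpoint, the gain, the speed limits) is a hypothetical chosen for illustration, not a value from any real CDU or DCIM product.

```python
from dataclasses import dataclass


@dataclass
class PumpController:
    """Hypothetical proportional controller for a coolant pump."""

    target_temp_c: float = 65.0  # assumed chip temperature setpoint
    gain: float = 5.0            # pump % added per degree C above target
    min_speed: float = 30.0      # assumed minimum pump speed (%)
    max_speed: float = 100.0     # pump speed ceiling (%)
    lookahead_steps: int = 3     # how far ahead to extrapolate the trend

    def predict_temp(self, readings):
        """Linearly extrapolate the last two readings a few steps ahead."""
        if len(readings) < 2:
            return readings[-1]
        trend = readings[-1] - readings[-2]
        return readings[-1] + trend * self.lookahead_steps

    def pump_speed(self, readings):
        """Return pump speed (%) from recent chip temperatures, oldest first."""
        if not readings:
            raise ValueError("need at least one temperature reading")
        error = self.predict_temp(readings) - self.target_temp_c
        # Only speed up when the predicted temperature exceeds the target.
        speed = self.min_speed + self.gain * max(error, 0.0)
        return min(max(speed, self.min_speed), self.max_speed)


if __name__ == "__main__":
    controller = PumpController()
    for history in ([60.0, 60.0], [64.0, 66.0], [70.0, 80.0]):
        print(history, "->", controller.pump_speed(history), "% pump speed")
```

Because the controller reacts to the extrapolated temperature rather than the current one, a rising trend raises pump speed before the setpoint is actually crossed. A production system would replace the extrapolation with a trained model and add safeguards such as rate limits and sensor-fault handling.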

Picking the Right Method

| Method | Density Fit | Cost | Ease of Upgrade | Best For |
| --- | --- | --- | --- | --- |
| Air Cooling | <20 kW | Low | Easy | Legacy / Low AI |
| Direct-to-Chip | 50–150 kW | Medium-High | Moderate | GPU-heavy AI |
| Immersion | 100+ kW | High | New builds | Extreme density |
| Hybrid | Varies | Flexible | Scalable | Enterprises |

Note: The best choice depends on power needs, facility design, budget, and long-term business goals. For many enterprises, hybrid systems offer the most flexible starting point.
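As a rough first-pass filter, the density ranges in the table can be encoded as a small lookup. The function below is a sketch only: `suggest_cooling` is a hypothetical helper, it considers rack density alone (not cost, facility design, or upgrade path), and it treats the 20–50 kW gap that the table leaves unassigned as a hybrid zone, which is an assumption rather than something the table states.

```python
def suggest_cooling(rack_kw):
    """Return candidate cooling methods for a rack power density in kW.

    Thresholds mirror the "Density Fit" column of the comparison table.
    """
    if rack_kw <= 0:
        raise ValueError("rack_kw must be positive")
    if rack_kw < 20:
        return ["Air Cooling"]
    if rack_kw < 50:
        # Assumption: the table does not cover 20-50 kW; treat it as hybrid.
        return ["Hybrid"]
    candidates = []
    if rack_kw <= 150:
        candidates.append("Direct-to-Chip")
    if rack_kw >= 100:
        candidates.append("Immersion")
    return candidates


if __name__ == "__main__":
    for kw in (10, 35, 60, 120, 200):
        print(f"{kw} kW -> {', '.join(suggest_cooling(kw))}")
```

Where the ranges overlap (100–150 kW), the function returns both liquid options, leaving the final choice to the factors listed in the note above.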

Conclusion

Cooling AI workloads needs smarter planning as rack power keeps increasing.

From air cooling to advanced liquid cooling technologies, each method helps improve performance, efficiency, and sustainability in different ways.

Hybrid systems often offer the best balance because they provide flexibility, scalability, and better cost control.

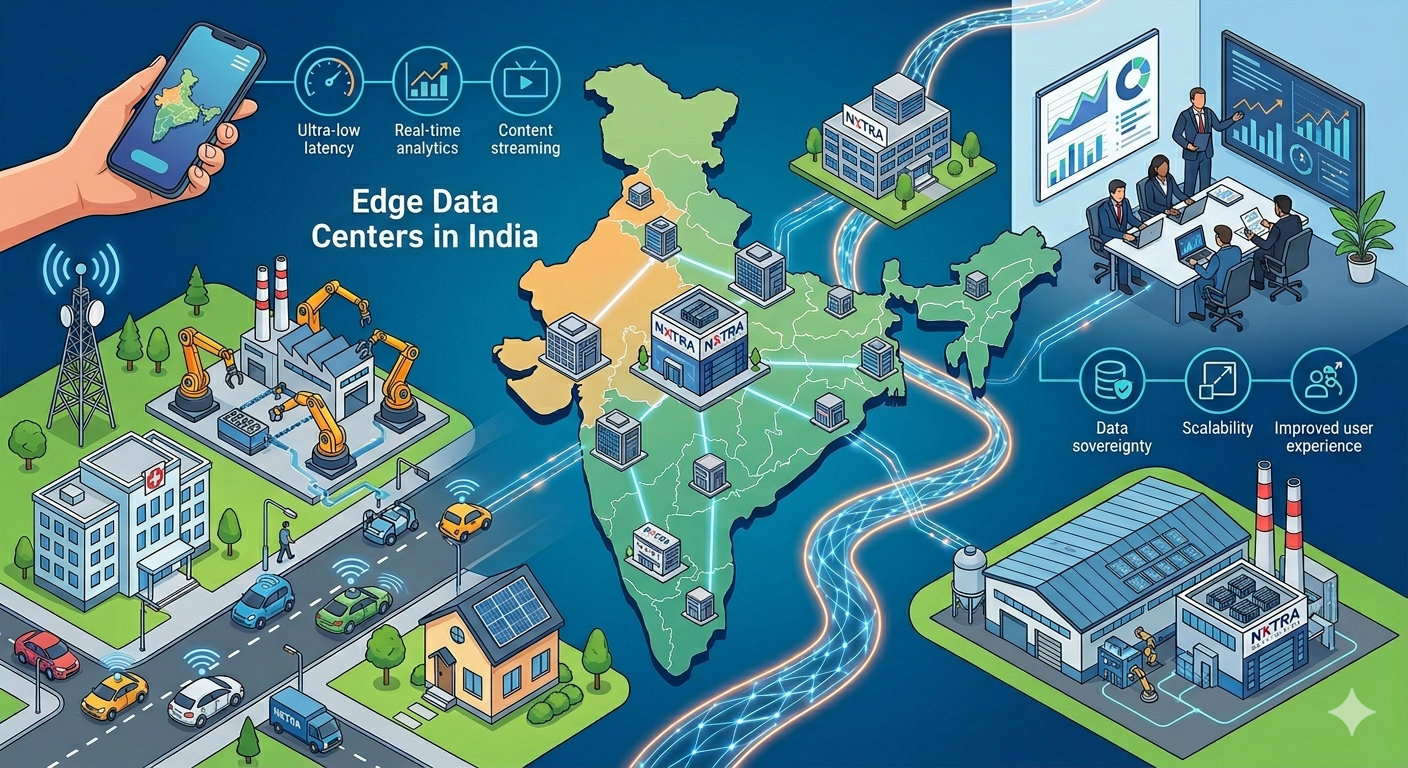

Nxtra by Airtel supports enterprises with AI-ready data centers built for high-density workloads. With advanced liquid cooling, direct-to-chip systems, and hyperscale infrastructure, Nxtra helps businesses deploy AI smoothly while preparing for future growth.

FAQs

- AI racks generate significantly higher heat loads than traditional servers due to their dense concentration of high-performance GPUs and processors. As rack power densities increase, conventional air cooling systems struggle to remove heat efficiently. At these higher power levels, relying only on fans and airflow can lead to hotspots, overheating, reduced system performance, and increased risk of hardware failures.

- Yes. Modern liquid cooling systems use sealed loops or special non-conductive fluids that safely remove heat from electronic hardware.

- Yes. Better cooling systems improve energy efficiency, lower power use, and can support heat reuse for stronger sustainability.
